VeSecurity

(VeSecurity is a fictitious name, as the company prefers that its name not be published (for security reasons :P))

LeSS Adoption for a Safety & Security Management Product

Introduction and Context

The product in question is a solution for managing the safety and security aspects of a large area such as a mall or an airport. It integrates different elements such as video cameras, door sensors, smoke detectors, and more. By monitoring these, security departments can do efficient real-time incident management, for example for a security breach or a fire.

The original group structure was

- 5 component teams responsible for developing different components of the system.

- 5 manual testing teams, each paired with a component team.

- 3 test-automation teams: two created and maintained system-level functional tests, and the third was responsible for automated non-functional testing.

The teams were located in two sites, in different countries but in the same timezone.

At site A there were 4 development teams, 4 testing teams, the non-functional test-automation team, and one functional system test-automation team. The other teams were at site B.

All component teams had their own team manager. The testing teams were all managed by the same manager, with a lead tester in each team. There was a single product manager.

The lifecycle was sequential, including a 3-month planning phase for each annual release.

There was also a command and control culture. For example:

- The work was assigned to the team members by their managers.

- Effort estimates were made by the team managers.

- Every design had to be reviewed by the team manager. The test design required approval from the QA manager.

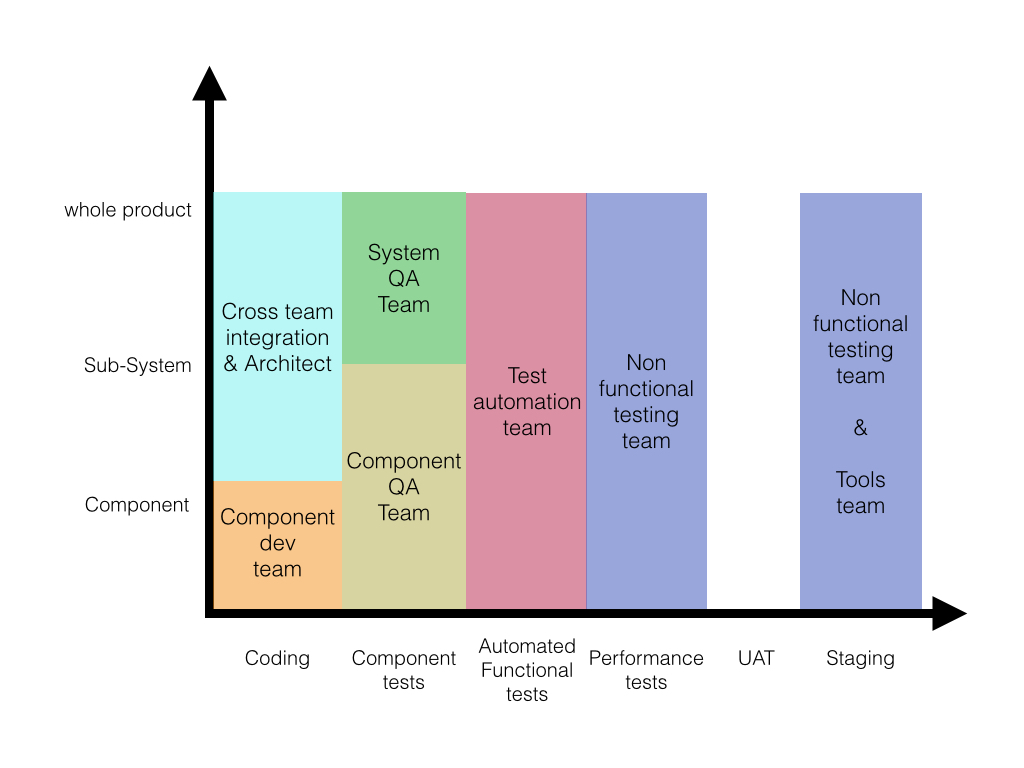

The chart above is a Feature-Team Adoption Map (a tool used in LeSS adoptions) that illustrates the original state of the teams with respect to scope of tasks and scope of architectural components. The white/blank areas represent those for which no one was responsible.

Motivation for change

When the customer approached us, the main challenges they were facing were the common problems we often see in organizations: low quality, no visibility of real progress, difficulty dealing with changes, and low customer satisfaction. There was a willingness to try a significant change to create a much better system, and so we helped the group adopt large-scale Scrum (LeSS).

The First Experiment: One Feature Team and Scrum

Following a short learning and observation period, we conducted a management workshop to share our learnings and recommendations.

Although we would have liked to create a fully cross-functional and cross-component feature team, we knew that for the first step this would be challenging, because of limited skills. For example, the group had only one technical writer. Immediately including her in the one new feature team would create a problem, because she still had writing responsibilities across the entire (traditional) group. This conflict is inevitable when there’s a one-team-incremental step towards feature teams surrounded by a still-traditional group. So the Definition of Done for this single team was imperfect; it excluded customer documentation, for example.

And so, because of the extremely cautious nature of the client (regarding change) and the challenge of having all of the skills needed to create a shippable product, as described above, we recommended starting with a single ‘weak’ feature team that excluded performance testing, test automation, and technical writing. We were applying the LeSS experiment described in Practices for Scaling Lean & Agile Development, called Try…Transition from component to feature teams gradually. We advised timeboxing the pilot step until the end of the current release (3 months in the future), hoping that success with this one feature team would result in enough buy-in from management to broaden and deepen the adoption to LeSS.

To start with we had to create a Scrum feature team, and identified the following people:

- a Scrum Master

  - the managers chose him (sigh… command and control culture)

- a Product Owner

  - the product manager agreed to take on this role

- diverse Team Members to create a cross-component and cross-functional team, excluding skills for performance testing, test automation, and technical writing

  - the existing team managers chose them

We moved the new ‘weak’ feature team to a big room so that everyone could sit together.

Initial Product Backlog Creation and Definition of Done

Following this preparation, we conducted a Scrum training for the new team, Product Owner, and the managers from the organization, and immediately after that we performed a 2-day Product Backlog creation workshop with the team and Product Owner.

The first thing we did in this workshop was to define the Definition of Done (DoD), which was:

- coded

- code reviewed

- code committed to the main branch

- all manual testing complete

- all automated tests integrated into the build system

- maximum defects (0 critical, 1 major, 1 medium, 4 minor)

A note about the defect policy in the Definition of Done: It is common when transitioning to Scrum to introduce a zero defect policy. But this specific group, given their history of quality problems, felt that having a zero defect policy was too overwhelming for them. Still, we wanted to somehow limit the number of defects in a way that reduced the risk and hopefully limited the number of “stabilization Sprints” to one. But, yeah, yuck!
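The defect limits in this Definition of Done amount to a simple threshold rule. The following is a minimal sketch of such a check, not something the group is known to have used: the function name, the dictionary layout, and the idea of passing a list of open-defect severities are all assumptions made for illustration. The source also does not say whether the limits applied per item or per release, so the sketch just checks whatever list it is given.

```python
from collections import Counter

# Limits from the Definition of Done (0 critical, 1 major, 1 medium, 4 minor).
# The dictionary layout and names are hypothetical, chosen for illustration.
MAX_OPEN_DEFECTS = {"critical": 0, "major": 1, "medium": 1, "minor": 4}


def meets_defect_policy(open_defects):
    """Return True if the open defects stay within every severity limit.

    open_defects is a list of severity names, one entry per open defect.
    """
    counts = Counter(open_defects)
    unknown = set(counts) - set(MAX_OPEN_DEFECTS)
    if unknown:
        raise ValueError(f"unknown severities: {sorted(unknown)}")
    # Counter returns 0 for severities that do not appear in the list.
    return all(counts[sev] <= limit for sev, limit in MAX_OPEN_DEFECTS.items())
```

For example, one open major defect and two open minor defects would pass this check, while a single critical defect would fail it.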

In addition, the group discussed some of the likely content for the next 3-month period. The team raised many questions regarding the features, and some major changes to the content emerged during this workshop.

The First Sprint

As is common in Scrum, since it reveals weaknesses, the first Sprint ended without any items ‘done’. In the retrospective the team identified that the major reasons for not getting any item to done were:

- Lack of test automation.

- Lack of progress visibility.

- Items were too big.

In addition to deciding to create smaller items, the team decided to learn about and write the automated tests themselves when the separate test-automation team was not available to help in time.

The Next Three Months

Over the following months the single pilot feature team slowly learned, improved, and was able to deliver better. Perhaps more importantly, the management was able to see that most of the impediments for the team were not in the team itself per se, but in the old organization that was defined and maintained by the management.

Scaling to Multiple Feature Teams and LeSS

After a period of about 3 months, the level of satisfaction with the transition to even a weak feature team and Scrum was extremely high, and so a management decision was made to perform a product-wide transition.

This is the point in the adoption where a Scrum adoption became a large-scale Scrum (LeSS) adoption.

As recommended in a LeSS adoption, as a first step we focused on education. All of the employees went through a two-day agile training, and all of the managers and Scrum Masters went through a one-day workshop on LeSS. This workshop was based on ideas and experiments from the first two LeSS books: Scaling Lean & Agile Development, and Practices for Scaling Lean & Agile Development. The workshop included topics such as systems thinking, waste, root cause analysis, and more.

We expanded the DoD to include all documentation.

At this time the “Undone” work still included unit testing, full regression test automation, and stress/performance tests.

The next step was to change the organizational structure so that it fit the new DoD. The group was reorganized into 7 feature teams: 5 at one site, and 2 at the other.

There was also a division by site into major feature areas: the 5-team site focused on normal user features, and the 2-team site focused on back-office and administration features.

The test-automation team was dissolved; some of the members joined the feature teams, and others joined the performance-testing team.

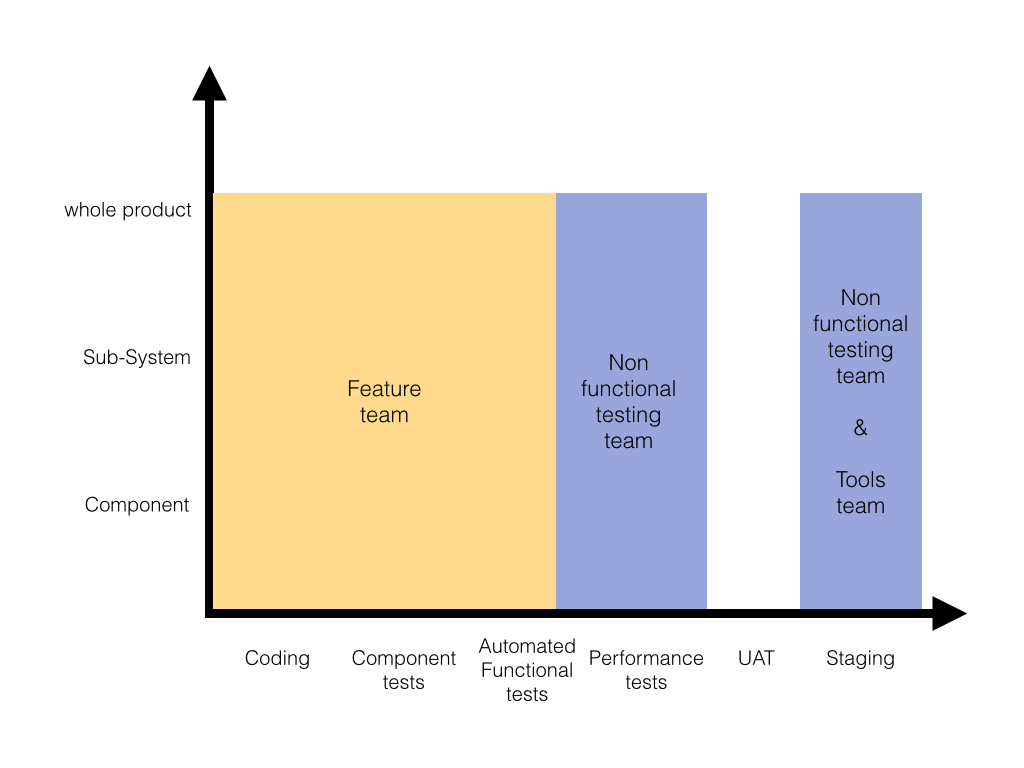

The structure is illustrated in the chart above.

*The blank (white) areas represent areas for which no one was responsible.

As expected, the change introduced many challenges. Here are examples of challenges we faced while still planning the reorganization:

- The team members did not have an available communication channel to the users, or enough business understanding to handle the clarification of requirements, and the real PO did not have enough time. We made the mistake of putting two system architects in the role of intermediate requirement analysts (a position which we knew should not exist in Scrum), instead of solving the root-cause problem by connecting teams with the true requirement donors.

- The previous common-platform group had only 3 members and a team lead, which was not enough to distribute experts to each new team. Our experiment was to nominate the platform leader as an internal consultant, not part of any team, with the role of supporting the teams when they needed help.

- Some of the prior team leaders were not considered a good fit for the Scrum Master role. The agreed experiment, given the politics of the organization, was to nominate a few of them as Scrum Masters and gradually coach them in their new role, although we recognized this was unlikely to turn out well, as it seems ex-managers and ex-team-leads rarely make good Scrum Masters.

- The office layout had small rooms for 2-4 people, and we were not able to seat all members of a team in the same room. The experiment was to give each team adjacent rooms.

The First Two Sprints

The beginning was extremely difficult! There was a lot of confusion and resistance with regard to the change. The following are challenges we encountered because we were new to adopting Scrum and scaling, didn’t realize the implications of our initial mistakes, and lacked the proper support from senior managers to truly change the power relationships, roles, and responsibilities.

- The so-called Scrum Masters (formerly team leaders, but still command-and-control managers) were not good. There was no support for self-organizing teams, or for giving up direction-control to the Product Owner. Rather, they commanded the teams, turned the Daily Scrum into a status report, and assigned tasks. It was a Scrum farce.

- Teams were new and team maturity was low, leading to difficulty in decision making, fear of error and a desire for high predictability. Combined with the fake Scrum Masters (managers), this caused a decrease in employee motivation.

- Because of previous expertise and skills, especially for the testers, teams were working in a mini-waterfall inside the iteration and not in a real Scrum process.

- Coordination and collaboration between teams were lacking. Each team was very much focused on itself and lacked a whole-product focus. This led to teams creating problems for each other, which resulted in daily conflicts.

- There was only one equipment-testing lab, and the fact that teams now tested more often created a constrained-resource queue that introduced major delays.

- The QA manager was confused regarding her role, since it was not clear what her responsibility was and what value she added. On one hand she was still in charge of the non-functional testing team, but that was only one team, and having a manager for a one-team group seemed wasteful to her.

- Most teams did not have all of the knowledge needed to plan and execute a complete end-to-end feature, which created delays and bottlenecks. This also made the planning and design meetings very long and difficult.

- It was very difficult to achieve proper clarification of items, since teams weren’t talking directly to users, customers, and other real requirement donors. The Product Owner and “requirement analysts” (system architects) acted as bottlenecks and prevented the teams from bypassing them.

- There was a conflict with the sales & marketing organization. They expected a detailed release plan with high predictability.

- The group had only one technical writer, whose availability was not enough to support the entire group.

- Lack of synchronization between the two sites led to different definitions of done and different standards of working.

All of these problems (and some more), combined with the implications of meeting the Done criteria, generated, as expected, a major decrease in productivity, which led to more pressure and resistance. But given the satisfaction with the pilot team, senior management supported the change and communicated this to the rest of the organization, which gave the teams some “learning space”.

Sprint 4 – Some Improvement

The end of Sprint 4 was considered a major milestone. At the end of this Sprint ALL of the teams were able to demonstrate done functionality, and following the Sprint Review we celebrated this success by having an ice cream party. That was sweet :)

Sprint planning was done in two parts, with the first including the fake Scrum Master/managers representing the teams, since there was still no support by senior management to introduce real support for self-organizing and the elimination of the command-and-control managers.

An overall retrospective started after Sprint 4. The teams identified that the increase in collaboration between the teams had a major contribution to the success of the last Sprint, and several improvement experiments were agreed upon:

- Focus on reducing the lab resource queue size by splitting the current lab into two testing environments.

- Have a synchronization meeting for all teams twice a week.

- Create a Scrum Master community.

Although there were still major problems, in general it was visible that the atmosphere was starting to change. Team members started seeing the value in LeSS (even with fake Scrum Masters and intermediate analysts) and started expressing satisfaction with the new way of working.

One sign of improvement was that average Sprint Planning duration decreased.

The Next Sprints

As the feature-team structure, ways of working, and meetings in LeSS started becoming a habit, a new wave of challenges became apparent:

| Challenges | Experiments / Solutions |
|---|---|
| Teams were improving and increasing effectiveness mostly in the coding parts (by pairing, unit testing, TDD, test automation, etc.) and were able to complete more features in less time. The testers, most of whom had no development background, felt that they did not have enough work. | It became a goal of the organization to train and educate all testers in development skills. They are no longer hiring team members without development skills, and the role “tester” does not exist anymore. |
| The fake Scrum Master-managers were representing and controlling the teams, and as teams matured this was becoming an increasing problem, as it prevented self-organizing and bigger improvements. | A lot of coaching was done with the fake Scrum Master-managers, and some of them increased their level of trust in the teams and changed their habits. A small minority of them still insist on representing the teams, and unfortunately management does not take a clear stand on this issue, probably due to the fear of them leaving the company. All this illustrates a point observed in many Scrum adoptions: introducing managers as Scrum Masters is a recipe for major problems. |
| The lack of whole-product focus also created problems of consistency between the teams: inconsistent design and code, and even an inconsistent UI. | Communities started to emerge, and some component mentors were nominated. Open space meetings are held from time to time. |
| Performance testing is still a separate team. | They are still not running the performance tests inside the Sprint, but they have reached a stage where all of the simulated data for the performance tests is a part of the DoD. |
| The QA manager was confused regarding her role, since it was not clear what her responsibility was in the new structure. | She eventually quit her job. |
| The feature area that was assigned to the smaller site became almost obsolete, and the teams there were seriously lacking the knowledge to take on new-area features. | Eventually the smaller site was closed and the entire product was developed at one site. |
| Partly because of organizational culture, teams still have very little direct communication with the end users and have a hard time clarifying requirements. | They used a variation of a common technique called “retired experts”, getting the security personnel of the company to provide feedback. While these people are not “classic” users of the product, it does help, but it can still be greatly improved. |
| As teams were learning and improving, they often wanted to implement changes to the CI system or other tools; however, company regulation dictated that only the tools group could do that. | Still a huge challenge. |
| Company culture is corrosively competitive and there is a yearly employee / team / group evaluation process. Lacking a better idea of how to compare teams, senior management used velocity. Yuck! | While some teams are able to fake the personal evaluation process, it is still a huge challenge. |

Some of these problems are resolved by now, some partly resolved, and some still exist.

A positive observation is that this group was able to really adopt a continuous improvement and experimentation mindset, and even though things are not perfect, this product group has become a learning organization and is now regularly improving.

Much Later…

About 1.5 years after working in the organization I accidentally bumped into the person who was leading the product group. My first question to him was, “How is it going? How is it working for you?” The answer he gave was, “It’s hard, but it’s magnificent”.

Later that year he contacted me again. He had taken on a new role, leading a new division, and now wants to start a LeSS adoption in his new division as well. But this time, we won’t try managers as Scrum Masters ;)